save time through shell script unzipping multiple zip files

ubuntu shell bash utility javascript browser-addon newbie

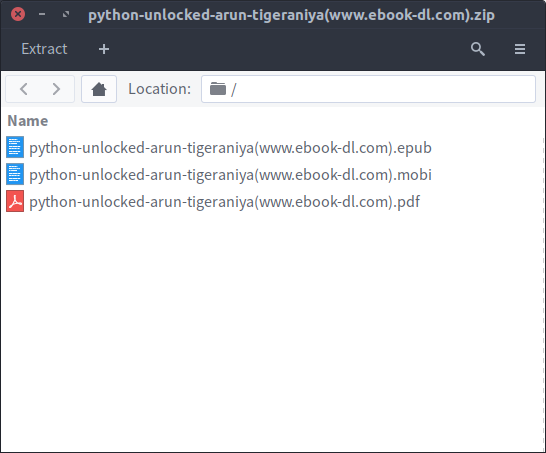

15 Dec 2016 I got carried away and downloaded lots of pdf files packed in zip files, so I needed a way to extract each file into one directory. I had to brush up on shell scripting to get the job done. Extracting one file at a time was becoming too tedious. Most of these zip files had either pdf, epub or mobi files. I prioritized pdf files.

My goal was to get the pdf file. If there wasn’t one, I would take the epub, and if that wasn’t included either I would extract the whole zip archive into a specific directory (out).

First off I checked how many zip files I was dealing with: about 148 zip archives.

ls *.zip | wc -l
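Counting with `ls | wc -l` is fine for a quick look, but it counts lines, so an odd file name can throw it off. As a minimal sketch of an alternative, the helper below counts the glob matches directly. `count_zips` is a hypothetical name introduced here for illustration, not something from the original workflow.

```shell
# count_zips DIR: print how many *.zip entries DIR contains (hypothetical helper).
# The body runs in a subshell, so the cd and the changed positional
# parameters do not leak into the calling shell.
count_zips() (
    cd "${1:-.}" || exit 1
    set -- *.zip              # positional parameters become the glob matches
    [ -e "$1" ] || set --     # no match leaves the literal pattern behind; drop it
    echo "$#"
)
```

Calling `count_zips .` in the download directory should print the same number as the `ls` pipeline when all file names are ordinary.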

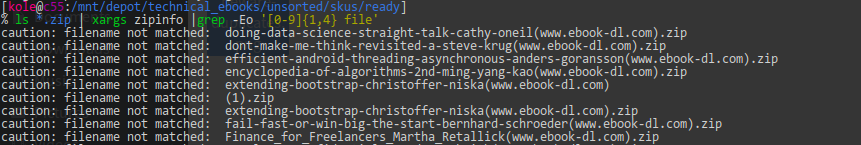

Guided by the assumption that if there was only 1 file it had to be a pdf file, I hacked together a script to fetch the file count of a zip file using zipinfo and then grep the number. If the archive had more than one file I could extract only the pdf file, or else extract the single file. I started testing commands to see if I could get the file count correctly.

ls *.zip | xargs zipinfo | grep -Eo '[0-9]{1,4} file'

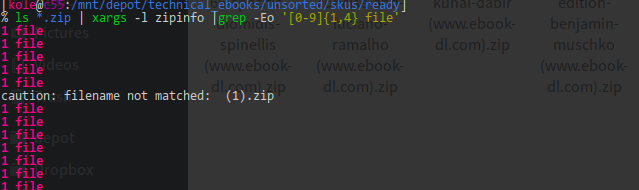

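That didn’t work: zipinfo takes one archive per call and treats any further arguments as names of files inside that archive, so I added the -l flag to xargs (the same as -L 1), which runs the command once per input line.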
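To see the difference without touching any archives, here is a small sketch that uses `echo` as a stand-in for the real command, followed by a helper that pulls the count out of a summary line. `file_count` is a hypothetical name, and it assumes the summary has the `N files, ...` shape that zipinfo prints at the end of a listing.

```shell
# By default xargs packs every name into ONE command line:
printf '%s\n' a.zip b.zip | xargs echo zipinfo
# prints: zipinfo a.zip b.zip

# With -L 1 it starts one command per input line:
printf '%s\n' a.zip b.zip | xargs -L 1 echo zipinfo
# prints: zipinfo a.zip
#         zipinfo b.zip

# file_count: read a listing on stdin and print the number from its
# "N file(s)," summary line (hypothetical helper; the format is assumed).
file_count() {
    grep -Eo '[0-9]+ files?,' | grep -Eo '[0-9]+'
}
```

Using `[0-9]+` rather than a single digit keeps counts such as 10 or 12 intact.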

Testing it out showed that it had problems with duplicate file names (the ones ending in (1)), so I wrote another line to get rid of the duplicates.

find . -iname "*([0-9])*" -exec rm {} +
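Since `rm` cannot be undone, one cautious option is to list what a pattern matches before deleting anything. The sketch below assumes the duplicates carry a parenthesised number such as `(1)` in their name. `list_numbered_copies` is a hypothetical helper name.

```shell
# list_numbered_copies DIR: print files under DIR whose name contains a
# parenthesised digit, e.g. "book (1).zip". Nothing is deleted here.
list_numbered_copies() {
    find "${1:-.}" -type f -name '*([0-9])*' -print
}
```

Once the listing looks right, the same `find` expression can be run with `-exec rm {} +` in place of `-print`.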

Things were moving along smoothly, so I moved the snippet to float.sh

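#!/bin/bash

# float.sh

# loop over the glob directly so names with spaces survive
for i in *.zip

do

# number of files in the archive, taken from zipinfo's "N files, ..." summary
myvar=$(zipinfo "$i" | grep -Eo '[0-9]+ file' | grep -Eo '[0-9]+')

# treat an unreadable archive (empty count) as 0
if [ "${myvar:-0}" -gt 1 ]

then

unzip "$i" -d out "*.pdf"

else

unzip "$i" -d out

fi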

done

Finally I ran the script with ` ./float.sh ` and checked whether the number of extracted files matched the number of archives with ` cd out && ls | wc -l `. Surprise, surprise: I had 145 files. Something wasn’t right, so I wrote another script that uses the zip file names to check whether each archive had already been extracted. That’s how I noticed that some zip files held a single file that was either misspelled, e.g. .Epub instead of .epub, or was a single .mobi file, so it wasn’t getting extracted.

checking if files had already been extracted

for i in *.zip

do

# compare on the first 33 characters of the archive name
str=$(printf '%s' "$i" | cut -c1-33)

found=$(find out -iname "$str*")

# find prints the matching path, e.g. out/file-name.pdf, or nothing at all; -z is true for an empty string

if [[ -z $found ]]

then

echo "$i"

fi

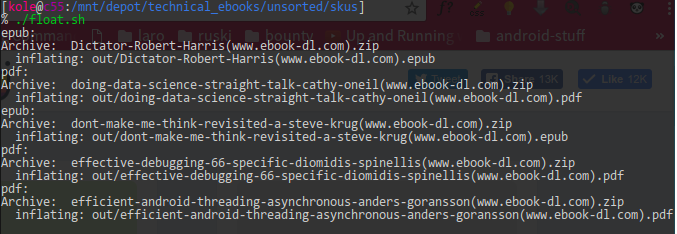

doneEnded up switching the logic to check if pdf, epub files exist in the zip and extracting them to the correct folder

improved logic

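for i in *.zip

do

# -i ignores case (.Epub, .PDF); \. is a literal dot and $ anchors at the end of the name
haspdf=$(unzip -l "$i" | grep -io '\.pdf$')

hasepub=$(unzip -l "$i" | grep -io '\.epub$')

# quote the variables: several matches would otherwise split into several words
if [ -n "$haspdf" ]; then

echo "pdf: $i"

# -C makes unzip match the pattern case-insensitively as well
unzip -C "$i" -d out "*.pdf"

elif [ -n "$hasepub" ]; then

echo "epub: $i"

unzip -C "$i" -d out "*.epub"

else

echo "other: $i"

unzip "$i" -d out

fi

done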
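The decision itself (pdf first, then epub, otherwise everything) can be tested without any archives by feeding it a plain list of names. This is only a sketch: `pick_format` is a hypothetical helper, and the names in the usage lines are made up.

```shell
# pick_format: read member names on stdin, one per line, and print which
# extraction to do: "pdf", "epub" or "all". Matching ignores case, so a
# name like Book.Epub still counts as an epub.
pick_format() (
    names=$(cat)
    if printf '%s\n' "$names" | grep -qi '\.pdf$'; then
        echo pdf
    elif printf '%s\n' "$names" | grep -qi '\.epub$'; then
        echo epub
    else
        echo all
    fi
)

# Example with made-up names:
printf '%s\n' 'Book.Epub' 'Book.mobi' | pick_format
# prints: epub
```

With real archives the input could come from `zipinfo -1 "$i"`, which lists member names one per line.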

Last but not least, rename the files to remove the (www.ebook-dl.com) tag:

rename -v 's/\(.*\)//' ./*
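Before renaming a whole directory it can help to preview what the substitution does to one name. The sketch below applies the same greedy "drop everything between the parentheses" idea with sed. `strip_tag` is a hypothetical helper and the book title is made up.

```shell
# strip_tag NAME: print NAME with its "(...)" part removed, mirroring the
# s/\(.*\)// expression passed to rename. In sed's basic regular
# expressions, unescaped ( and ) are literal characters.
strip_tag() {
    printf '%s\n' "$1" | sed 's/(.*)//'
}

strip_tag 'Some Book (www.ebook-dl.com).pdf'
# prints: Some Book .pdf
```

Note the space left before the extension. The Perl-based rename also accepts `-n`, which shows the planned renames without performing them.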

The biggest takeaway from this is that you should test your scripts on a few files first and always carry out sanity checks. Test each command on its own, because one bad command will ruin the whole pipeline.

links:

- fixing xargs error

- use zipinfo to read filecount

- fetching numbers in grep

- remove files after calling find

- shell scripting basics

- bash arithmetic

- bash cheatsheet

- unzip to particular directory

- unzip specific extensions only

- finding strings within string

- cutting strings in bash

- mass rename files

- case insensitive grep